Featured Articles

Hidden in Plain Sight

Write-up of our participation in the Powers of Tau secure multi-party compute ceremony.

🎲📹☂️→🏨💻☯️→🔨🔥💥

On Cryptographic Backdoors

Why cryptographic backdoors, or 'secure golden keys', are a bad and unnecessary idea, even for law enforcement.

Storing Passwords Securely

Why "SHA 256-bits enterprise-grade password encryption" is only slightly better than storing passwords in plain text, and better ways to do it.

Security Through Obscurity

Why I think the increasingly popular 'That's not security. That's obscurity.' attitude is unhelpful.

Most Recent Articles

Hidden in Plain Sight

Background

Powers of Tau is the latest Secure Multi-Party Computation protocol being used by the Zcash Foundation to generate the parameters needed to secure the Zcash cryptocurrency and its underlying technology in the future.

The process by which people from all over the world collectively generate these parameters is called the ceremony. As long as just one participant in the ceremony acts honestly and avoids having their private key compromised, the final parameters will be secure.
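To get a feel for why a single honest participant is enough, here is a toy sketch. It is not the real protocol, which works over elliptic-curve pairings and publishes many powers of tau along with proofs; the modulus `P`, the base `G`, and the function names are assumptions made up for illustration. Each participant raises a running value to their own secret exponent, so the final value depends on the product of all the secrets.

```python
import secrets

# Toy parameters (assumptions for illustration only): a Mersenne prime modulus
# and a small base. The real ceremony uses elliptic-curve groups instead.
P = 2**127 - 1
G = 3


def contribute(accumulator: int, secret: int) -> int:
    """Fold one participant's secret into the running value."""
    return pow(accumulator, secret, P)


def run_ceremony(participant_secrets) -> int:
    """Apply every participant's contribution in turn, starting from G."""
    accumulator = G
    for secret in participant_secrets:
        accumulator = contribute(accumulator, secret)
    return accumulator


# Three participants; each secret stands in for that participant's toxic waste.
participant_secrets = [secrets.randbelow(P - 2) + 2 for _ in range(3)]
final = run_ceremony(participant_secrets)

# The result equals G raised to the product of all the secrets.
combined = 1
for secret in participant_secrets:
    combined *= secret
assert final == pow(G, combined, P)
```

In this toy model, somebody who wants the combined exponent needs every individual secret, so one participant who destroys theirs is enough to keep it out of reach.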

The below is a write-up of Amber Baldet’s and my own participation in that ceremony, with Robert Hackett of Fortune Magazine observing parts of the process.

Warning: The below contains fire.

The Theme of Our Participation

We live in New York City, one of the most surveilled and crowded places in the world. While others who have participated in the ceremony made explicit attempts to escape civilization and avoid surveillance, we thought it would be interesting to leverage the lack of privacy and the general inability to do things in secret in a large city in a way that nevertheless contributes to the creation of a technology that does give people back privacy.

As part of our participation, we wanted to sample New Yorkers’ thoughts on personal privacy, and to extract information from public places in such a way that, even if somebody were following along and watching, our computation would have only a small chance of being compromised. In particular, we employed operational security measures such as paying only in cash and keeping our movements unpredictable. We also sought to prevent a possible adversary from:

- being able to deduce our plan

- being able to predict what items we will buy and where

- being able to continue following us, if they already are

- discovering where we will perform the computation in time to deploy advanced attacks that don’t require physical access to our hardware

We did accept that doing this in NYC would make it nearly impossible to resist the active efforts of law enforcement. We did not attempt to thwart, nor did we notice, such an effort.

We dubbed this plan “Hidden in Plain Sight”.

Gathering Supplies

We begin on the morning of March 15th, 2018. Amber has bought:

- Several different kinds of dice

- Play-Doh to stuff into unused ports of hardware we will buy later

- A blow torch nozzle and two propane tanks to burn things

- A mallet to smash things

- Two large umbrellas even though it’s a beautiful sunny day

- Adhesive labels to make physical tampering of hardware and doors evident

We unpack the dice and pull up a list of electronics stores in Manhattan. Without clicking any of the options, which would reveal our choices to Google, we each roll dice to determine two stores to go to. Amber doesn’t tell me where she is going, and I don’t tell her. We turn off our phones and embark.
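Turning dice rolls into a fair choice from a list takes a little care, because the number of options rarely divides evenly into the number of possible outcomes. The write-up does not say how rolls were mapped to choices; the sketch below shows one possible approach (rejection sampling), assuming six-sided dice. The function names are hypothetical.

```python
import secrets


def pick_index(num_options: int, roll_die) -> int:
    """Pick a uniform index in [0, num_options) from six-sided die rolls.

    Rolls are read as base-6 digits. Values that would introduce bias are
    thrown away and re-rolled (rejection sampling).
    """
    if num_options < 1:
        raise ValueError("need at least one option")
    # Use enough rolls that the number of outcomes covers all options.
    rolls, span = 1, 6
    while span < num_options:
        rolls += 1
        span *= 6
    # Largest multiple of num_options that fits within the outcomes.
    limit = span - (span % num_options)
    while True:
        value = 0
        for _ in range(rolls):
            value = value * 6 + (roll_die() - 1)
        if value < limit:
            return value % num_options


def simulated_die() -> int:
    """Stand-in for a physical die; returns 1 through 6."""
    return secrets.randbelow(6) + 1
```

With a list of ten stores, `pick_index(10, simulated_die)` uses two rolls per attempt and re-rolls whenever the pair lands in the last six of the 36 outcomes.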

(We powered on our phones in flight mode a couple of times to take pictures.)

At each of the electronics stores, we’re looking for:

- A portable video camera similar to a GoPro

- A MicroSD memory card for the camera

- An SD card reader

We roll dice to determine which of the available camera and SD card options to pick, and then again to determine which of the items on the shelf to buy. The store employees don’t seem to care about our behavior. Just another day in New York, I guess.

Meanwhile, Amber meets up with Robert Hackett and goes to a print shop, where she selects a workstation via dice roll and types up a note containing the text “Thank you for participating! You can learn more about the project here:” and a QR code linking to the Powers of Tau page.

We rendezvous again in Union Square. Over lunch at a randomly selected location, we unpack and charge the cameras, making sure that they are within sight at all times.

Gathering Entropy

Good toxic waste for use in a Powers of Tau computation contains a high amount of randomness, or entropy. We can’t rely on the entropy collectors in computers–what if they’re all broken? So the first task at hand is collecting randomness that an adversary would have to spend a very large amount of money to replicate.

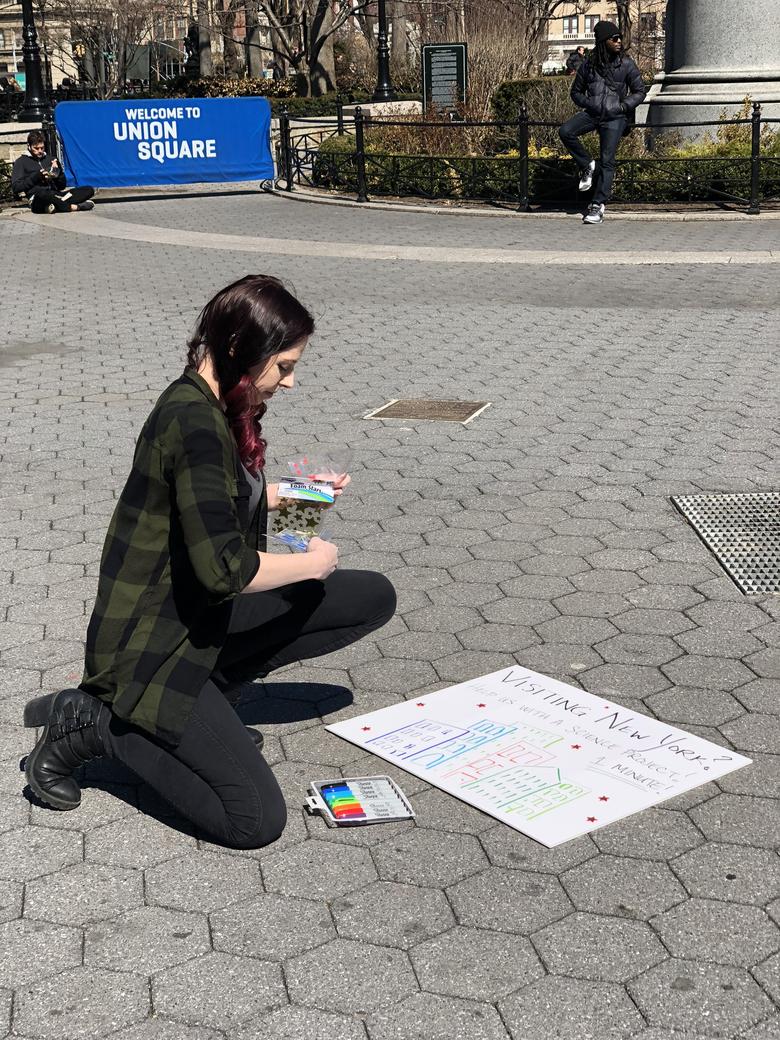

Amber prepares a sign intended to pique people’s curiosity.

I turn on the first camera, and we both pop open our umbrellas. The umbrellas are intended to obscure the view of any eyes in the sky.

I hit record, and Amber starts approaching strangers.

A couple from Canada is curious why we have umbrellas out when it’s sunny, and we sit down next to them.

During the conversation, Amber asks, “How do you feel about the statement ‘If you haven’t done anything wrong, you’ve got nothing to hide’?”.

The man replies, “I would say that that’s accurate.”

“That’s accurate?”, Amber asks, seeking confirmation.

“Absolutely,” he says.

We go over to two men sitting on a metal fence surrounding the shrubbery. After a bit of small talk, Amber again poses the question, “What do you think about the statement ‘If you’re not doing anything wrong, you’ve got nothing to hide’?”

The man shakes his head before pronouncing, “That’s complete, complete bullshit. The government will always spy on what you’re doing if they want to. And what you’re doing that’s not wrong today may be wrong tomorrow. What you think isn’t wrong they might think is wrong.”

Our last conversation in Union Square is with a guy who’s had just about enough of his friend talking about cryptocurrencies. “My friend talks about so many different ones. I can’t even remember any of them.”

“Let me just put down my spliff here,” he says as he realizes we’re recording.

Amber asks if he’s heard that Bitcoin is anonymous and private.

“Yes, I have,” he says.

“Do you think that’s true?”, she asks.

“No, I don’t,” he replies.

We get on the 6 train toward Grand Central. Moving quickly through the underground tunnels of the city in a metal tube, I again start recording. Even though I’m only recording people’s feet, a local New Yorker is not impressed. “Get a picture for your ass!”, she yells.

Walking through the tunnels of Grand Central, we encounter a group of Hare Krishna monks playing music. I hit record and we stop to talk to one of them.

“Have you ever heard of Bitcoin?”, Amber asks.

“Yes”, he says, exasperatedly, seemingly unimpressed by the cryptocurrency trend.

A little later, Amber asks him, “What’s the greatest secret?”

He replies, “Everyone is dying around you, and yet everyone thinks it’s not gonna happen to them. How to figure out the solutions to birth, death, disease and belief, these are the real questions, not how many Bitcoins do I have in my imaginary whatever account.”

I thank him for his time. As we turn toward the S train to Times Square, Amber asks offhand if he knows the current price of Bitcoin. “I dunno,” he says, “about ten thousand?”

As we get out of the subway station at Times Square, I pull out the other camera and hit record, capturing little bits of a heightened police presence, thousands of visitors to the city, and, of course, Elmo. We make an explicit decision not to approach Elmo, as getting in a fistfight may compromise our mission.

On the red stairs in the center of Times Square, we again open our umbrellas and have another few conversations.

Preparing for the Computation

Now that we’ve collected a sufficient amount of information that would be hard to replicate, we seal up the cameras and put them away. Next up is obtaining the hardware that will be used to perform the computation.

We set out towards a random electronics store.

Once at the store, we again roll dice to determine a laptop to buy, and which of the boxes to choose. We end up with a Celeron-powered Lenovo laptop with 2GB RAM and 32GB disk space.

(We tried to find a laptop with an ARM processor, a hardware configuration that hasn’t been used very much in the ceremony, but the store had none.)

The sales representative asks us what we’re going to use the laptop for.

“Doing one thing and then destroying it,” I reply.

“Oh, ok. Would you like to add extended warranty?” he continues.

I politely decline.

Performing the Computation

We select a hotel by dice roll and go there to get a room for one night. That we’re three people with several umbrellas, a bunch of unopened electronics, and no luggage doesn’t faze the hotel staff.

“Can we get a room facing toward the back?” I ask.

“Yes, this is in the back,” the attendant replies.

For once I am excited to hear this.

To complete check-in I’m forced to provide identification and a credit card, compromising our position without necessarily revealing which room we’re in. (Being a real spy with a fake ID would’ve helped here.)

We get to the room and turn on the white noise generator conveniently installed in the window. We unbox the laptop and immediately install:

- Ubuntu 16.04.4 - SHA256: `3380883b70108793ae589a249cccec88c9ac3106981f995962469744c3cbd46d`

- Go 1.10 - SHA256: `b5a64335f1490277b585832d1f6c7f8c6c11206cba5cd3f771dcb87b98ad1a33`

- The zip file of Filippo Valsorda’s alternative Go implementation of the Powers of Tau algorithm at commit `e2af113817477d26e6155f1aae478d3cb58d62c5` - SHA256: `fc473838d4dcc6256db6d90b904f354f1ef85dd6615cca704e13cfdd544316fe`
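Checksums like the ones above can be checked against a downloaded file with a few lines of code. This is a minimal sketch, not a record of how the files were actually verified; the helper names are hypothetical.

```python
import hashlib
import hmac


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path: str, expected_hex: str) -> bool:
    """Compare a file's digest with a published checksum."""
    expected = expected_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)
```

Calling `matches_expected` with the path of a download and the published hex string returns `True` only if the file is byte-for-byte what was published.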

During the OS installation, the disk is encrypted with LUKS/dm-crypt using a very long, random password that is written down and later destroyed.

We make sure the laptop’s radio transmitters, like Wi-Fi and Bluetooth, are disabled. Then we copy the video files from both cameras to the hard drive and split them into one-megabyte chunks. We then randomly select a sequence of these chunks using /dev/urandom, and feed that sequence of video files into a hash function, producing some of our toxic waste.
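The chunk-and-hash step can be sketched roughly as follows. Two things here are assumptions, because the write-up specifies neither: the hash function (BLAKE2b) and how many chunks are selected (`count`). The function names are hypothetical as well.

```python
import hashlib
import random

CHUNK_SIZE = 1024 * 1024  # one megabyte, as described above


def split_into_chunks(data: bytes, size: int = CHUNK_SIZE):
    """Split raw bytes into fixed-size chunks; the last one may be shorter."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def hash_random_sequence(chunks, count: int, rng=None) -> bytes:
    """Hash a randomly chosen sequence of chunks into a single digest."""
    if rng is None:
        # SystemRandom draws from the operating system's CSPRNG, the same
        # source that backs /dev/urandom on Linux.
        rng = random.SystemRandom()
    hasher = hashlib.blake2b()  # assumption: the hash function is not named
    for _ in range(count):
        hasher.update(rng.choice(chunks))
    return hasher.digest()
```

Because the selection itself is random, somebody holding a copy of the same video files still could not reproduce the digest without also knowing which chunks were picked and in what order.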

We modify the taucompute source code to use a different cryptographically secure random number generator than the /dev/urandom one, seed it with the toxic waste, some random data from the system, a series of dice rolls, and some confidential inputs from both Amber and myself, and start the computation.
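One common way to fold several independent inputs into a single seed is to hash them together with length prefixes. The sketch below illustrates that idea only; it is not the modification made to taucompute, and the function name, digest size, and example inputs are all assumptions.

```python
import hashlib
import os


def combine_entropy(*sources: bytes) -> bytes:
    """Derive a 32-byte seed from several independent byte strings."""
    hasher = hashlib.blake2b(digest_size=32)
    for source in sources:
        # Length-prefix every input so that (b"ab", b"c") and (b"a", b"bc")
        # produce different seeds.
        hasher.update(len(source).to_bytes(8, "big"))
        hasher.update(source)
    return hasher.digest()


# Hypothetical inputs standing in for the ones described above.
video_digest = bytes(64)                  # placeholder for the video-derived hash
system_random = os.urandom(32)            # random data from the system
dice_rolls = bytes([3, 1, 6, 2, 5, 4])    # made-up dice rolls
confidential = b"input known only to a participant"

seed = combine_entropy(video_digest, system_random, dice_rolls, confidential)
```

The appeal of this construction is that the seed is unpredictable as long as at least one of the inputs is, even if all the others are known to an adversary.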

(These rolls were not used.)

The laptop is pretty poor, and the computation ends up taking much longer than expected. (We had been hoping to run it a number of times to increase the odds that a compromise would be revealed.) Robert and Amber both head out, and I wait for the computation to complete, wondering a couple of times if somebody is blasting the room with radiation to try to extract the toxic waste.

(This kind of attack as well as electromagnetic analysis isn’t something we explicitly tried to guard against other than by making our location hard to predict.)

At around 2 AM the computation completes. I copy from the laptop the response file along with its BLAKE2b checksum:

The BLAKE2b hash of `./response` is:

3eee603c e0feaaf9 47655238 7636462b

56bcba97 2b0e4b79 513b6ffc 8ba323d1

8574c79c ab756800 cae43440 6fa99eca

8445da3d e20b9af7 665325ff 89993df8

After disconnecting power from the laptop (thus requiring re-entry of the encryption password), I seal up the laptop and memory cards used, and head home. Once at home, I seal up the doors and hide the hardware deep in my closet, in a place that could not be reached without waking me up.

(At the time of writing, it does not feel like I was drugged.)

Destroying the Secrets

The next day, Amber, Robert, and I meet up again, this time on Amber’s rooftop. Armed with propane and a blow torch nozzle, a mallet, and a fire extinguisher (just in case), we incinerate and smash the memory cards and laptop.

After a good deal of catharsis and minimal inhalation of plastic fumes, we are done: We did our part of the Powers of Tau ceremony, and were reminded to not take life for granted along the way.

Hopefully nobody was able to extract our toxic waste before we destroyed it!

Many thanks to Jason Davies for coordinating our participation, and to the whole Zcash team for creating a solid technology that genuinely benefits humanity.

Why Nobody Understands Blockchain

One of the biggest problems with blockchain (“crypto”) seems to be that nobody really understands it. We’ve all heard the explanation that you have blocks, and transactions go into blocks, and they’re signed with signatures, and then somebody mines it and then somehow there’s a new kind of money…? My friend says so, anyway.

I DONT UNDERSTAND BITCOIN pic.twitter.com/nqZLI9eHHX

— Coolman Coffeedan (@coolcoffeedan) January 3, 2018

Why can’t anyone give a clear explanation of what it is?

Blockchain is difficult to understand because it isn’t one thing, but rather pieces of knowledge from a wide variety of subjects across many different disciplines–not only computer science, but economics, finance, and politics as well–that go by the name “blockchain”.

To demonstrate this, I (with help from others) compiled a (non-Merkle) tree of the subjects one needs to grasp, at least superficially, to (begin to) fully “understand blockchain”:

- Computer Science
  - Algorithms
    - Tree and graph traversals
    - Optimizations
    - Rate-limiting / backoff
    - Scheduling
    - Serialization
  - Compilers
    - Lambda calculus
    - Parsers
    - Turing machines and Turing completeness
    - Virtual machines
  - Computational Complexity
    - Halting problem
    - Solvability
  - Data Structures
    - Bloom filters
    - Databases
      - Key-value stores
    - Tries, radix trees, and hash (Merkle) Patricia tries
  - Distributed Systems
    - Byzantine generals problem
    - Consensus
      - Adversarial consensus
      - Byzantine fault tolerant consensus
      - Liveness and other properties
    - Distributed databases
      - Sharding
      - Sharding in adversarial settings
    - Peer-to-peer
      - Peer-to-peer in adversarial settings
  - Formal methods
    - Correctness proofs
  - Information security
    - Anonymity
      - Correlation of metadata
      - Minimal/selective disclosure of personal information
      - Tumblers
    - Fuzzing
    - Operational security (OPSEC)
      - Hardware wallets
      - Passwords and passphrases
    - Risk analysis
    - Threat modeling
  - Programming languages
    - C / C++ (Bitcoin)
    - Go (Ethereum)
    - JavaScript (web3)
    - Solidity (Ethereum)
  - Programming paradigms
    - Functional programming
    - Imperative programming
    - Object-oriented programming
- Cryptography
  - Knowing which primitives to use and when
  - Knowing enough not to implement your own crypto
  - Symmetric ciphers
  - Asymmetric
    - Public/private key
    - Elliptic curves
  - Hash functions
    - Collisions
    - Preimages
    - Stretching
  - Secure Multi-party Computation
  - Signature schemes
    - BIP32 wallets
    - Multisig
    - Schnorr
    - Shamir secret sharing
    - Ring signatures
  - Pedersen commitments
  - zk-SNARKS
  - Implications of quantum algorithms
  - Oracles
- Economics
  - Behavioral Economics
  - Fiscal Policy
  - Game Theory
  - Incentives
  - Monetary Policy
- Finance
  - Bearer assets
  - Collateral
  - Compression
  - Custody
  - Debt
  - Derivatives
  - Double-entry accounting
  - Escrow
  - Fixed income
  - Fungibility
  - Futures
  - Hedging
  - Market manipulation
  - Netting
  - Pegging
  - Securities
  - Settlement Finality
  - Shorts
  - Swaps
- Mathematics
  - Graph Theory
  - Information Theory
    - Entropy
    - Randomness
  - Number Theory
  - Probability Theory
  - Statistics
- Politics
  - Geopolitics
    - National cryptography suites
  - Regulations
    - Export controls / Wassenaar Arrangement
    - Sanctions
    - Tax systems

Blockchain understanding lies between the Dunning-Kruger effect and Imposter Syndrome: If you think you understand it, you don’t. If you think you don’t because there are still parts you don’t get, you probably understand it better than most.

Thanks to Amber Baldet for helping to compile this list.

Think something should be added? Let me know.

Quorum and Constellation

Two projects I’ve been working on for the past year:

Quorum, written in Go, is a fork of Ethereum (geth) developed in partnership with Ethlab (Jeff Wilcke, Bas van Kervel, et al.) with some major changes:

- Transactions can be marked private. Private transactions go on the same public blockchain, but contain nothing but a SHA3-512 digest of encrypted payloads that were exchanged between the relevant peers. Only the peers who have and can decrypt that payload will execute the real contracts of the transaction, while others will ignore it. This achieves the interesting property that transaction contents are still sealed in the blockchain’s history, but are known only to the relevant peers.

- Because not every peer executes every private transaction, a separate, private state Patricia Merkle trie was added. Mutations caused by private transactions affect the state in the private trie, while public transactions affect the public trie. The public state continues to be validated as expected, but the private state has no validation. (In the future, we’d like to have the peers verify shared private contract states.)

- Two new consensus algorithms were added to supplant Proof-of-Work (PoW): QuorumChain, a customizable, smart contract-based voting mechanism developed by Ethlab, and a Raft-based consensus mechanism, developed by Brian Schroeder and Joel Burget, which builds on the Raft implementation in etcd. (Neither of these is hardened/Byzantine fault tolerant, but we hope to add a BFT alternative soon.)

- Peering and synchronization between nodes can be restricted to a known list of peers.
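The split between public and private state can be pictured with a heavily simplified model. This is a sketch for intuition, not Quorum's code: contract execution is reduced to setting a key to a value, plain dictionaries stand in for the Patricia Merkle tries, and all class and field names are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Toy stand-in for contract execution: set a key to a value.
Write = Tuple[str, str]


@dataclass
class Transaction:
    write: Optional[Write] = None   # present on public transactions
    digest: Optional[str] = None    # present on private transactions


@dataclass
class Node:
    # Decrypted private payloads this node received, keyed by digest.
    payloads: Dict[str, Write] = field(default_factory=dict)
    public_state: Dict[str, str] = field(default_factory=dict)
    private_state: Dict[str, str] = field(default_factory=dict)

    def apply(self, tx: Transaction) -> None:
        if tx.digest is None:
            # Public transaction: every node applies it to the public state.
            key, value = tx.write
            self.public_state[key] = value
            return
        write = self.payloads.get(tx.digest)
        if write is None:
            return  # not a party to this private transaction, so skip it
        key, value = write
        self.private_state[key] = value
```

Two nodes that process the same chain end up with identical public state, while only the node holding the payload for a given digest sees any change to its private state.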

Constellation, written in Haskell, is a daemon which implements a simple peer-to-peer network. Nodes on the network are each the authoritative destination for some number of public keys.

When users of another node’s API request to send a payload (raw bytestring) to some of the public keys known to the network, the node will:

- Encrypt the payload in a manner similar to PGP, but using NaCl’s secretbox/authenticated encryption

- Encrypt the MK for the secretbox for each recipient public key using box

- Transmit the ciphertext to the receiving node(s)

- Return the 512-bit SHA3 digest of that ciphertext

In the case of Quorum, the payload is the original transaction bytecode, and the digest, which becomes the contents of the transaction that goes on the public chain, represents its ciphertext.
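The role of the digest as a pointer to the ciphertext can be sketched with the standard library alone. The encryption steps are deliberately left out here: the ciphertext is treated as opaque bytes that NaCl would have produced, and `PayloadStore` is a hypothetical name, not part of Constellation.

```python
import hashlib


class PayloadStore:
    """Toy stand-in for a node's storage of received ciphertexts."""

    def __init__(self):
        self._by_digest = {}

    def receive(self, ciphertext: bytes) -> bytes:
        """Store a ciphertext and return its 512-bit SHA3 digest."""
        digest = hashlib.sha3_512(ciphertext).digest()
        self._by_digest[digest] = ciphertext
        return digest

    def lookup(self, digest: bytes):
        """Return the ciphertext for a digest, or None if it is unknown."""
        return self._by_digest.get(digest)
```

A recipient node can resolve the digest found in a public transaction back to its ciphertext with `lookup`, while a node that never received the payload gets `None` and has nothing to decrypt.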

A succinct description of Constellation might be “a network of nodes that individually are MTAs, and together form a keyserver; the network allows you to send an ‘NaCl-PGP’-encrypted envelope to anyone listed in the keyserver’s list of public keys.”

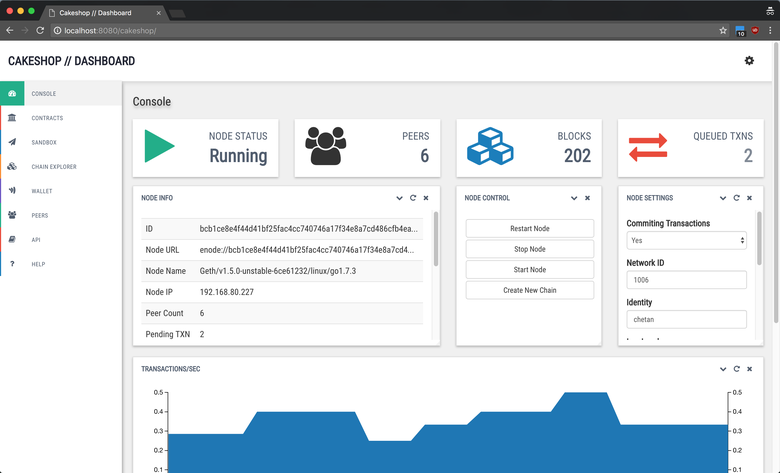

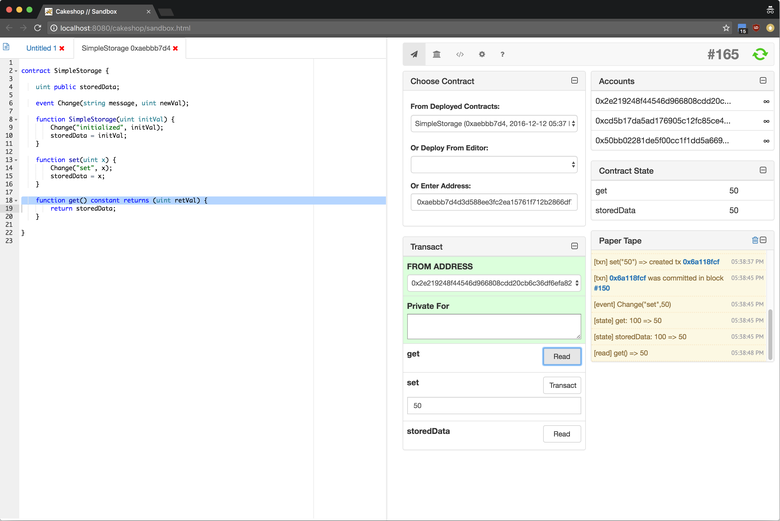

Finally, I’d be remiss if I didn’t mention Cakeshop, an IDE for Ethereum and Quorum developed by Chetan Sarva and Felix Shnir:

Cakeshop is a set of tools and APIs for working with Ethereum-like ledgers, packaged as a Java web application archive (WAR) that gets you up and running in under 60 seconds.

Included in the package is the geth Ethereum server, a Solidity compiler and all dependencies.

It provides tools for managing a local blockchain node, setting up clusters, exploring the state of the chain, and working with contracts.

If you’re interested in being a part of these projects, please jump in!

On Cryptographic Backdoors

In 1883, the Dutch cryptographer Auguste Kerckhoffs outlined his requirements for a cryptographic algorithm (a cipher) to be considered secure in the French Journal of Military Science. Perhaps the most famous of these requirements is this: “It [the cipher] must not be required to be secret, and must be able to fall into the hands of the enemy without inconvenience;”

Today, this axiom is widely regarded by most of the world’s cryptographers as a basic requirement for security: Whatever happens, the security of a cryptographic algorithm must rely on the key, not on the design of the algorithm itself remaining secret. Even if an adversary discovers all there is to know about the algorithm, it must not be feasible to decrypt encrypted data (the ciphertext) without obtaining the encryption key.

(This doesn’t mean that encrypting something sensitive with a secure cipher is always safe—the strongest cipher in the world won’t protect you if a piece of malware on your machine scoops up your encryption key when you’re encrypting your sensitive information—but it does mean that it will be computationally infeasible for somebody who later obtains your ciphertext to retrieve your original data unless they have the encryption key. In the world of cryptography, “computationally infeasible” is much more serious than it sounds: Given any number of computers as we understand them today, an adversary must not be able to reverse the ciphertext without having the key, not just for the near future, but until long after the Sun has swallowed our planet and exploded, and humanity, hopefully, has journeyed to the stars.)
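To put “computationally infeasible” into numbers, here is a back-of-the-envelope sketch of how long it would take to try every possible key. The attacker's speed (a billion machines, each testing a trillion keys per second) is an assumption chosen to be generous, not a measured figure.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_exhaust(key_bits: int, guesses_per_second: float) -> float:
    """Years needed to try every key of the given length."""
    return (2 ** key_bits) / guesses_per_second / SECONDS_PER_YEAR


# Assumption: a billion machines, each testing a trillion keys per second.
rate = 1e9 * 1e12

for bits in (128, 256):
    print(f"{bits}-bit key: about {years_to_exhaust(bits, rate):.1e} years")
```

Under these assumptions, exhausting a 128-bit keyspace takes on the order of ten billion years, and a 256-bit keyspace is many orders of magnitude beyond that.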

This undesirable act of keeping a design detail secret and hoping no bad guys will figure it out is better known as “security through obscurity,” and though this phrase is often misused in criticisms of non-cryptosystems (in which secrecy can be beneficial to security,) it is as important and poignant for crypto now as it was in the nineteenth century.

A Dutchman Rolling Over In His Grave

These days, criminals are increasingly using cryptography to hide their tracks and get away with heinous crimes (think child exploitation and human trafficking, not crimes that perhaps shouldn’t be crimes, a complex discussion that is beyond the scope of this article.) Most people, including myself, agree that cryptography aiding these crimes is horrible. So how do we stop it?

A popular (and understandable) suggestion is to mandate that ciphers follow a new rule: “The ciphertext must be decryptable with the key, but also with another, ‘special’ key that is known only to law enforcement.” This “special key” has also been referred to as “a secure golden key.”

It rolls off the tongue nicely, right? It’s secure. It’s golden. What are we waiting for? Let’s do it.

Here’s the thing: A secure golden key is neither secure nor golden. It is a backdoor. At best, it is a severe security vulnerability—and it affects everyone, good and bad. To understand why, let’s look at two hypothetical examples:

Example A: A team of cryptographers design a cipher “Foo” that appears secure and withstands intense scrutiny over a long period of time. When there is a consensus that there are no problems with this cipher, it is put into use, in browsers, in banking apps, and so on.

(This was the course of events for virtually all ciphers that you are using every day!)

Ten years later, it is discovered that the cipher is actually vulnerable. There is a “shortcut” which allows an attacker to reverse a ciphertext even if they don’t have the encryption key, and long before humans are visiting family in other solar systems. (This shortcut is usually theoretical or understood to not weaken the cipher enough to pose an immediate threat, but the reaction is the same:) Confidence in the cipher is lost, and the risk of somebody discovering how to exploit the vulnerability is too great. The cipher is deemed insecure, and the process starts over…

Example B: After the failure of “Foo,” the team of cryptographers get together again to design a new cipher, “Bar”, which employs much more advanced techniques, and appears secure even given our improved understanding of cryptography. A few years prior, however, a law was passed that mandates that the cryptographers add a way for law enforcement to decrypt the ciphertext long before everyone has a personal space pod that can travel near light speed. A “shortcut” if you will, that will allow egregious crimes to be solved in a few days or weeks instead of billions of years.

The cryptographers design Bar in such a way that only the encryption key can decrypt the ciphertext, but they also design a special and secret program, GoldBar, which allows law enforcement to decrypt any Bar ciphertext, no matter what key was used, in just a few days, and using modest computing resources.

The cipher is put into use, in browsers and banking apps, and…

…

See the problem?

That’s right. Bar violates Kerckhoffs’ principle. It has a clever, intentional, and secret vulnerability that is exploited by the GoldBar program, the “secure golden key.” GoldBar must be kept secret, and so must the knowledge of the vulnerability in Bar.

Bar, like the first cipher Foo, is not computationally infeasible to reverse without the key, and therefore is not secure—only this time, this is known to the designers before the cipher is even used! And not only that: Bar’s vulnerability isn’t theoretical, but extremely practical. That’s the whole point!

Here’s the problem with “practical:” For GoldBar to be useful, it must be accessible to law enforcement. Even if it is never abused in any way by any law enforcement officer, “accessible to law enforcement” really means “accessible to anyone who has access to any machine or device GoldBar is stored or runs on.”

No individual, company, or government agency knows how to securely manage programs like GoldBar and their accompanying documentation. It is not a question of “if,” but “when” a system storing it (or a human using that system) is compromised. Like many other cyberattacks, it may go unnoticed for years. Unknown and untrusted actors—perhaps even everyone, if Bar’s secrets are leaked publicly—will have full access to all communication “secured” by Bar. That’s your communication, and my communication. It’s all secure files, chats, online banking sessions, medical records, and virtually all other sensitive information imaginable associated with every person who thought they were doing something securely, including information belonging to people who have never committed any crime. There is no fix—once the secret is out, everything is compromised. There is no way to “patch” the vulnerability quickly and avoid disaster: Anyone who has any ciphertext encrypted using Bar will be able to decrypt it using GoldBar, forever. We can only design a new cipher and use that to protect our future information.

Enforcing the Law Without Compromising Everyone

Here’s the good news: We don’t need crypto backdoors/“secure golden keys.” There are many ways to get around strong cryptography that don’t compromise the security of everyone—for example:

Strong cryptography does not prevent a judge from issuing a subpoena forcing a suspect to hand over their encryption key.

Strong cryptography does not prevent a person from being charged with contempt of court for failing to comply with a subpoena.

Strong cryptography does not prevent a government from passing laws that increase the punishment for failure to comply with a subpoena to produce an encryption key.

Strong cryptography does not prevent law enforcement from carrying out a court order to install software onto a suspect’s computer that will intercept the encryption key without the cooperation of the suspect.

Strong cryptography does absolutely nothing to prevent the exploitation of the thousands of different vulnerabilities and avenues of attack that break “secure” systems, including ones using “Military-grade, 256-bit AES encryption,” every single day.

How to go about doing any of these things, or whether to do them at all, is the subject of much discussion, but it’s also beside my point, which is this: We don’t need to break the core of everything we all use every day to combat crime. Most people, including criminals, don’t know how to use cryptography in a way that resists the active efforts of a law enforcement agency, and this whole discussion doesn’t apply to the ones who do, because they know about the one-time pad.
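For readers who haven't come across it, the one-time pad is simple enough to sketch in a few lines. This is an illustration only: it assumes the pad is truly random, as long as the message, shared securely in advance, and never reused. Here `secrets.token_bytes` stands in for a true random source, and the function names are hypothetical.

```python
import secrets


def otp_encrypt(message: bytes):
    """Return (pad, ciphertext); the pad is as long as the message."""
    pad = secrets.token_bytes(len(message))
    ciphertext = bytes(m ^ p for m, p in zip(message, pad))
    return pad, ciphertext


def otp_decrypt(pad: bytes, ciphertext: bytes) -> bytes:
    """XOR the ciphertext with the same pad to recover the message."""
    if len(pad) != len(ciphertext):
        raise ValueError("pad and ciphertext must be the same length")
    return bytes(c ^ p for c, p in zip(ciphertext, pad))


pad, ciphertext = otp_encrypt(b"meet at noon")
assert otp_decrypt(pad, ciphertext) == b"meet at noon"
```

There is no algorithm here to weaken and no place for a second key: without the pad, every message of the same length is an equally plausible decryption.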

We’re still getting better at making secure operating systems and software, but we can never reach our goal if the core of all our software is rotten. Yes, strong cryptography is a tough nut to crack—but it has to be, otherwise our information isn’t protected.

Problems with Cyber-Attack Attribution

Things were easy back when dusting for prints and reviewing security camera footage was enough to find out who stole your stuff. The world of cyber isn’t so simple, for a few reasons:

Everything is accessible to a billion blurred-out faces

The Internet puts the knowledge of the world within reach of a large portion of its inhabitants. It also puts critical infrastructure and corporate networks within the reach of attackers from all over the world. No plane ticket or physical altercation is necessary to rob and sabotage even high-profile entities.

Keeping your face out of sight of the security cameras that are now commonplace in most cities around the world whilst managing to avoid arousing suspicion requires significant finesse. It’s likely the most difficult aspect of any physical crime in a public space. But if you look at the virtual equivalents of cameras and cautious bystanders, intrusion detection/SIEM systems and operations staff, you quickly realize that they monitor information about devices, not people. A system log which shows that a user “John” logged on to the corporate VPN at 2:42 AM on a Saturday may appear at first glance to indicate that John logged on to the corporate VPN at 2:42 AM on a Saturday, but what it actually shows is that one of John’s devices, or another one entirely, did so. John may be fast asleep. We (hopefully) have very little information about that.

When digital forensics teams are sifting through the debris after a cyberattack, this is what they find (if they find anything.) They don’t have the luxury of weeding out a grainy picture of a face that can be authenticated by examining official records or verifying with someone who knows the suspect.

The Internet stinks

Imagine if you could take your pick from any random passerby in the street, assume control of their body, and use it to carry out your crime from the safety and comfort of your living room. If the poor sap gets caught, they might exclaim that they have no idea what happened, and that they weren’t conscious of what they were doing, but to authorities the case is an open-and-shut one: It’s all right there on the camera footage, clear as day. And even if they were willing to believe this lunatic’s story, they know that random crimes (where the perpetrator has no connection to the victim) are nearly impossible to solve, and since they’d be embarking down a rabbit hole by entertaining more complex possibilities, it’s easier to just keep it simple.

In cyber, this strange hypothetical isn’t strange at all. It’s the norm. Very few attackers use their own devices to carry out crimes directly. They use other people’s compromised machines (e.g. botnet zombies), anonymization networks, and more. It’s virtually impossible to prove beyond a reasonable doubt that a person whose device or IP address has been connected to a crime was therefore complicit in it. All it takes is one link in a cleverly-crafted phishing email, or a Word attachment that triggers remote code execution, and John’s device now belongs to somebody whose politics differ greatly from his.

A forensic expert may find that John’s device was remote-controlled from another device located in Germany. Rudimentary analysis would lead to the conclusion that the real perpetrator is thus German. But what really happened is a layer has been pulled off an onion that may have hundreds of layers. Who’s to say our German friend Emma, the owner of the other device, is any more conscious of what it’s been doing than John was of his? It’s very difficult to know just how stinky this onion is based on a purely technical analysis.

It’s not just like in the movies; it’s worse.

Planting evidence is child’s play

It’s very difficult for me to appear as someone else on security footage, but it’s trivial to write a piece of malware that appears to have been designed by anyone, anywhere in the world. Digital false flag operations have virtually no barriers to entry.

Malicious code containing an English sentence with a structure that’s common for Chinese speakers may indicate that the author of the code is Chinese, or it may mean nothing more than someone wants you to think the author is Chinese. Malicious code that contains traces of American English, German, Spanish, Chinese, Korean and Japanese, but not Italian, is interesting, but ultimately gives the same false certainty.

But let’s say you know exactly who wrote the code. How do you know it’s not just being used by somebody else who may be wholly unaffiliated with the author?

Any technical person can be a criminal mastermind online

I worry about the future because any cyberattack of medium-or-higher sophistication will be near-impossible to trace, and we seem reluctant to even look beneath the surface (where things appear clear-cut) today, preferring instead to keep things simple. That an IP address isn’t easily linkable to an individual may be straightforward to technical readers, but it is less so to lawmakers and prosecutors. People are being convicted, on a regular basis, of crimes that are proven using the first one or two layers of the onion (“IP address X was used in this attack, and we know you’ve also used this IP address,”) and we seem to be satisfied with this.

Go up to the most competent hacker you know, and ask them how they’d go about figuring out who’s behind an IP address, or how you can distinguish between actions performed by a user and ones performed by malicious code on the user’s device, and they are likely to shrug their shoulders and say, “That’s pretty tricky,” or launch into an improv seminar on onion routing, mix networks and chipTAN. Yet we are willing to accept as facts the findings of individuals in the justice system who in many cases have performed only a simple analysis of the proverbial onion.

(Don’t get me wrong: Digital forensics professionals often do a fine job, but I’m willing to bet they are a lot less certain in the conclusions derived from their findings than the prosecutors presenting them and the presiding judges are.)

We have to be more careful in our approach to digital forensics if we want to avoid causing incidents more destructive than the ones we’re investigating, and if we want to ensure we’re putting the right people behind bars. If we can figure out who was behind a sophisticated attack in only a few days, there is a very real possibility we are being misled.

Technical details are important, but it’s only when we can couple them with flesh-and-blood witnesses, physical events, and a clear motive that we can reach anything resembling certainty when it comes to attribution in cyberspace.